3. Fine-Tuning

Hundreds of separate parameters influence almost every aspect of AI’s output. Because AI often produces unexpected results, achieving the highest possible production value requires continuous, iterative tweaking of these parameters. At the same time, the technology and tools are evolving at lightning speed, with new techniques, training models and features becoming available on what seems like an almost daily basis. By staying on the cutting edge of generative AI, we can continue to achieve unprecedented levels of control and quality in the finished product.

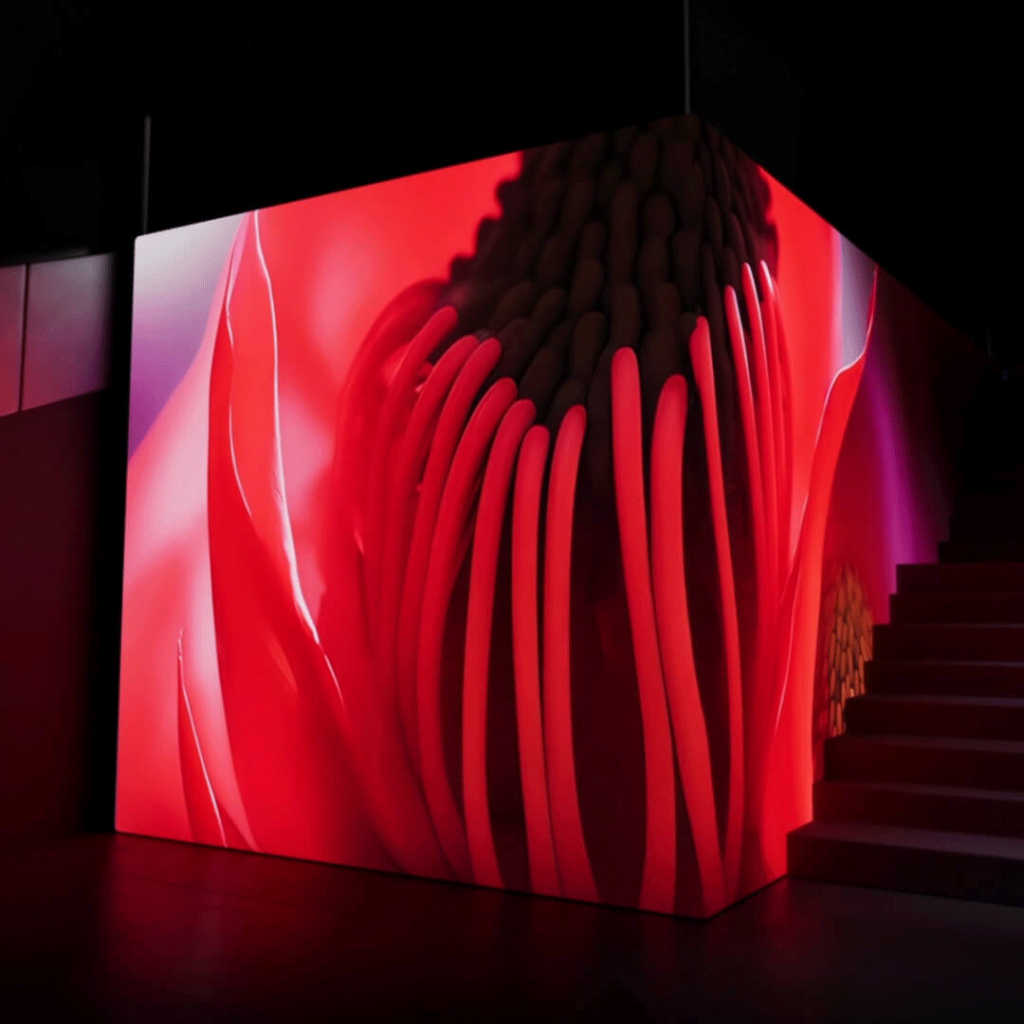
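
To give a rough sense of what iterative tweaking can look like in practice, here is a minimal sketch that lists every combination of a few settings so each variation can be rendered and compared. The parameter names and values (`guidance_scale`, `steps`, `seed`) are illustrative assumptions, not a description of any particular tool or of our own settings.

```python
from itertools import product

# Hypothetical parameter names and values, chosen only for illustration.
PARAMETER_GRID = {
    "guidance_scale": [5.0, 7.5, 10.0],
    "steps": [20, 40],
    "seed": [1, 2],
}


def build_variations(grid):
    """Return one settings dict per combination of parameter values."""
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


variations = build_variations(PARAMETER_GRID)
print(len(variations))  # 3 * 2 * 2 = 12 combinations
print(variations[0])    # first combination to try
```

Even three small parameters produce twelve candidate renders, which is why narrowing in on the right settings is an iterative process rather than a single pass.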

1. Embedding

The artificial intelligence algorithms responsible for generating the art itself work from a data set of imagery available on the World Wide Web, but they can be augmented with image-specific training, or ‘embeddings’, which refine AI’s ‘understanding’ of an object or scene. This machine-learning process involves feeding AI hundreds of similar, curated images, which it processes to identify patterns and significant features. The ‘knowledge’ it gains from ‘studying’ these images gives the human artists more control and precision in achieving the desired output.

2. Prompting

Once AI has gained a deeper understanding of certain objects through embedding, we create written prompts to tell it what we want it to create. Think of it like commissioning an artist to paint a picture: the more descriptive the prompt, the more control we have over the result. We can also supply images to give AI a starting point, which then evolves as the algorithm takes over and ‘develops’ the final artwork. AI will often include unwanted elements or artifacts; we use negative prompts, which list what should not appear, to avoid this.
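
As a simple illustration of how a prompt, a negative prompt and an optional starting image fit together, the sketch below assembles them into one request. The `GenerationRequest` class, its field names and the example wording are all hypothetical; real tools each have their own way of accepting these inputs.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class GenerationRequest:
    """Hypothetical container for everything we hand to the AI."""

    subject: str                                        # what we want painted
    details: List[str] = field(default_factory=list)    # extra description
    unwanted: List[str] = field(default_factory=list)   # things to avoid
    init_image: Optional[str] = None                    # optional starting image path

    def prompt(self) -> str:
        # More detail in the prompt means more control over the result.
        return ", ".join([self.subject, *self.details])

    def negative_prompt(self) -> str:
        # Lists the elements or artifacts that should not appear.
        return ", ".join(self.unwanted)


request = GenerationRequest(
    subject="portrait of a lighthouse keeper",
    details=["oil painting", "stormy sky", "warm lantern light"],
    unwanted=["blurry", "extra fingers", "watermark"],
)
print(request.prompt())
print(request.negative_prompt())
```

Keeping the wanted and unwanted descriptions separate makes it easy to adjust one without disturbing the other between iterations.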